How to Build a CI/CD Pipeline: A Complete Step-by-Step Tutorial

Estimated reading time: 18 minutes

Key Takeaways

- CI/CD pipelines automate software integration and deployment, dramatically reducing manual errors and accelerating release cycles.

- Continuous Integration (CI) is the practice of automatically building and testing code every time a developer commits a change.

- Continuous Deployment (CD) automatically releases validated code to production, eliminating manual deployment steps.

- Essential tools include a source repository like GitHub, a build server like Jenkins or GitHub Actions, and artifact storage like Docker Hub.

- “Pipeline as Code” is a critical best practice where the pipeline configuration (e.g., a `Jenkinsfile` or YAML file) is version-controlled with the application code.

- Securely managing secrets like API keys and passwords using built-in credential stores is non-negotiable for a safe pipeline.

Table of Contents

- The “Why”: Understanding CI/CD Fundamentals

- Gathering Your Tools: Pre-requisites for the Pipeline

- A Practical Continuous Integration Setup with Jenkins and GitHub Actions

- How to Build a CI/CD Pipeline: The Core Steps

- Automating Your Release: The Continuous Deployment Steps

- Making Your Pipeline Robust: Testing, Monitoring, and Feedback

- Pro Tips: Best Practices and Troubleshooting

- Your Journey with CI/CD Has Just Begun

- Frequently Asked Questions (FAQ)

If you’re part of a modern software team, learning how to build a CI/CD pipeline isn’t optional anymore—it’s essential.

You need to ship features faster. You need to maintain quality. And you definitely need to reduce those stressful manual deployments that seem to break at the worst possible moments.

Here’s the good news: A CI/CD pipeline solves all of these problems by automating the entire process of integrating code changes and deploying them to production. It reduces manual errors, accelerates your release cycles, and builds genuine confidence in your software releases.

Let’s break down what we’re talking about:

Continuous Integration (CI) is the practice of automatically building and testing your code every single time a developer pushes changes to a shared repository. No more waiting until Friday to find out that your code doesn’t compile with everyone else’s work.

Continuous Deployment (CD) takes it one step further. Once your code passes all the tests, it automatically releases to your production environment without you lifting a finger.

This CI/CD pipeline tutorial covers the fundamentals, walks through a practical continuous integration setup, implements the key continuous deployment steps, and shows working examples for both Jenkins and GitHub Actions so you can achieve true automated software deployment.

Let’s get started.

The “Why”: Understanding CI/CD Fundamentals

Before we dive into configuration files and build commands, you need to understand what makes CI/CD so valuable.

What is Continuous Integration (CI)?

Continuous Integration is the automated process of merging code changes from multiple developers into a single shared repository—not once a week, but multiple times every single day.

Here’s what happens during CI:

- Automated Builds: Every time someone commits code, the system automatically triggers a build process. This compiles your application and immediately catches any compilation errors. No more “it works on my machine” excuses.

- Automated Unit Tests: After a successful build, a complete suite of automated tests runs against your code. These tests verify that new features work correctly and, just as importantly, that nothing broke existing functionality. This prevents regressions—those frustrating bugs where fixing one thing breaks another.

- Code Quality Checks: Modern CI pipelines integrate static analysis tools and linters that automatically scan your code for style inconsistencies, potential bugs, and security vulnerabilities. Think of them as a second pair of eyes that never gets tired.

The benefits for development teams are significant. CI helps you ship code faster because you’re finding problems immediately rather than during a quarterly QA cycle. You catch defects and bugs much earlier in the development cycle when they’re cheaper and easier to fix. And team collaboration improves because everyone is working against the same, continuously validated codebase.

What is Continuous Deployment (CD)?

Continuous Deployment automates the release of your code to a live production environment after it successfully passes all the CI stages.

Here’s what makes CD powerful:

- Automatic Releases: Once your code is built, tested, and analyzed, it deploys to production automatically—no human intervention required. This is the core of true automated software deployment. Your Friday afternoon manual deployment checklist? Gone.

- Rollback Strategies: Of course, things can still go wrong. That’s why CD includes automated procedures to either revert to a previous stable version (rollback) or quickly fix the issue and deploy a new version (roll-forward). You’re never stuck with a broken production environment.

CD minimizes manual release processes, which means fewer opportunities for human error. That typo in an environment variable? Less likely to happen when it’s automated. And you can deliver new features and bug fixes to your users rapidly and reliably.

How CI and CD Work Together

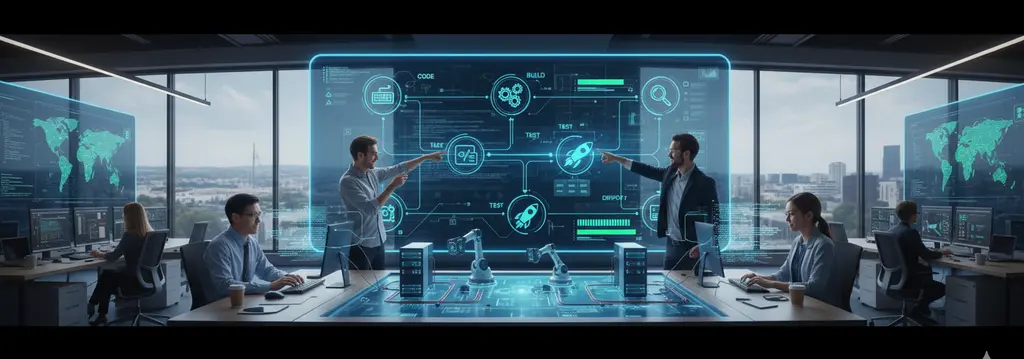

Think of CI and CD as sequential parts of a single assembly line.

CI is the factory floor where your product gets built and tested. CD is the delivery system that automatically ships it to customers.

When a developer pushes code:

- CI immediately builds it

- CI runs all the tests

- CI performs quality checks

- CD packages the validated code

- CD deploys it to production

This combined approach is a cornerstone of modern DevOps culture. It promotes automation throughout your entire workflow, creates fast feedback loops so developers know immediately if something is wrong, and maintains high reliability throughout the entire software delivery lifecycle.

Gathering Your Tools: Pre-requisites for the Pipeline

Before you can build your pipeline, you need the right tools. Here’s what you’ll need to gather.

Source Code Repository

Your pipeline starts with your code, so a version control system is absolutely mandatory.

We’ll use Git for this tutorial. I recommend GitHub specifically because it integrates seamlessly with GitHub Actions CI/CD, which we’ll demonstrate later. That said, other providers like GitLab or Bitbucket work perfectly fine, especially when paired with tools like Jenkins.

The key is having your code in a centralized, version-controlled repository that your build tools can access.

Build Server / CI Tool

This is the “brain” of your operation—the system that runs all your automation scripts.

For this tutorial, we’ll demonstrate two popular tools:

- Jenkins: A powerful, open-source automation server that has been around for well over a decade and is incredibly extensible. We’ll provide a complete Jenkins pipeline example that you can adapt for your needs.

- GitHub Actions: A CI/CD platform built directly into GitHub. If your code is already on GitHub, this is often the fastest way to get started since there’s no separate server to set up.

For context, other popular tools include GitLab CI/CD, CircleCI, and Travis CI. They all accomplish similar goals with slightly different approaches.

Artifact Storage

An “artifact” is the packaged output of your build process. This might be:

- A Docker container image

- A Java `.jar` file

- A `.zip` file of your web application assets

- An executable binary

You need somewhere to store these artifacts so your deployment process can retrieve them later.

Common options include Docker Hub, AWS ECR (Elastic Container Registry), or Azure Container Registry for container images. For non-containerized applications, you might use AWS S3, Nexus, or Artifactory.

Credentials and Environment Setup

Your pipeline needs secure access to various services—your source code repository, your artifact storage, your production servers.

You’ll need to configure access credentials like API tokens or SSH keys. You’ll also need to ensure your build agents (the machines that actually run your pipeline jobs) are properly configured and can reach the internet or any other necessary resources.

Don’t worry—we’ll cover the secure way to handle credentials later. The key principle: never hardcode secrets in your code.

A Practical Continuous Integration Setup with Jenkins and GitHub Actions

Now we get to the fun part—actually building something. I’ll show you two approaches so you can choose what works best for your situation.

Option 1: The Jenkins Continuous Integration Setup

Getting Jenkins running is straightforward. You can run it via Docker with a single command, or install it using your operating system’s package manager.
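If you would rather keep the setup in a file you can commit than in a one-off command, a Docker Compose file is one way to do it. This is a minimal sketch that assumes the official `jenkins/jenkins:lts` image; the volume name `jenkins_home` and the host ports are choices made for illustration, not requirements.

```yaml
# docker-compose.yml: a minimal local Jenkins (a sketch, not hardened for production)
services:
  jenkins:
    image: jenkins/jenkins:lts
    ports:
      - "8080:8080"    # web interface
      - "50000:50000"  # inbound agent connections
    volumes:
      - jenkins_home:/var/jenkins_home  # keeps jobs and configuration across restarts
    restart: unless-stopped

volumes:
  jenkins_home:
```

Start it with `docker compose up -d`, then open port 8080 in a browser to finish the setup wizard.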

Once it’s running, you’ll need to install a few essential plugins through the Jenkins web interface:

- The Git plugin (to pull code from repositories)

- The Pipeline plugin (to define pipelines as code)

Best practices from the start: Use folders to organize your jobs, especially if you’re managing multiple projects. And always store secrets like passwords or API keys in the Jenkins credentials store, never directly in your code or configuration files.

The Jenkinsfile

The heart of your Jenkins pipeline is a file called Jenkinsfile. This is a text file that lives right in your repository alongside your application code. It defines your entire pipeline as code, which means you can version it, review it in pull requests, and track changes over time.

Here’s a complete Jenkins pipeline example with detailed explanations:

    // This is a Jenkinsfile that defines a simple CI pipeline.
    pipeline {
        agent any // Run this pipeline on any available agent

        stages {
            // Stage 1: Get the source code
            stage('Checkout') {
                steps {
                    // 'git' step checks out code from your repository.
                    // It defaults to the 'master' branch, so name yours explicitly.
                    git branch: 'main', url: 'https://github.com/your-org/your-repo.git'
                }
            }

            // Stage 2: Compile the code
            stage('Build') {
                steps {
                    // 'sh' executes a shell command, e.g., 'make' or 'mvn compile'
                    sh 'make build'
                }
            }

            // Stage 3: Run automated tests
            stage('Test') {
                steps {
                    // This runs your test suite, e.g., 'npm test' or 'pytest'
                    sh 'make test'
                }
            }

            // Stage 4: Save the built application (artifact)
            stage('Archive') {
                steps {
                    // This saves files from the 'build/' directory for later use
                    archiveArtifacts artifacts: 'build/**', fingerprint: true
                }
            }
        }
    }

Let me break down what each stage does:

- Checkout Stage: This pulls your latest code from the Git repository. Every pipeline run starts fresh with the current state of your code.

- Build Stage: This compiles your application. The actual command depends on your tech stack; it might be `make build`, `mvn compile`, `npm run build`, or `dotnet build`. If compilation fails, the pipeline stops here.

- Test Stage: This runs your automated test suite. Again, the specific command depends on your framework; it could be `make test`, `npm test`, `pytest`, or `go test`. If tests fail, the pipeline stops.

- Archive Stage: This saves your build artifacts. The `archiveArtifacts` step stores files so you can download them later or use them in deployment stages.

Option 2: The GitHub Actions CI/CD Setup

If your code lives on GitHub, GitHub Actions offers a simpler setup because there’s no separate server to manage.

GitHub Actions workflows are defined in YAML files that live in the .github/workflows/ directory of your repository. When you push code, GitHub’s servers automatically run your workflows.

Here’s a complete GitHub Actions CI/CD workflow example:

    # This workflow is named "CI"
    name: CI

    # It triggers on any 'push' to the repo or on a 'pull_request'
    on: [push, pull_request]

    jobs:
      # A job named 'build-and-test'
      build-and-test:
        # It will run on the latest version of Ubuntu provided by GitHub
        runs-on: ubuntu-latest

        steps:
          # Step 1: Check out the code from the repo
          - uses: actions/checkout@v4

          # Step 2: Run the build command
          - run: make build

          # Step 3: Run the test command
          - run: make test

This workflow is remarkably simple but powerful:

- The Trigger: The `on: [push, pull_request]` line means this workflow runs automatically whenever someone pushes code or opens or updates a pull request. You get immediate feedback.

- The Environment: `runs-on: ubuntu-latest` tells GitHub to run this on a fresh Ubuntu virtual machine. GitHub provides these for free for public repositories.

- The Steps: Each step runs sequentially. The `actions/checkout@v4` action is a pre-built GitHub Action that checks out your code. Then your build and test commands run just like they would on your local machine.

How to Build a CI/CD Pipeline: The Core Steps

Now that you understand the tools, let’s connect everything into a flowing, automated process. Here’s exactly how to build a CI/CD pipeline from start to finish.

Step 1: Define Your Pipeline Stages

Start by mapping out the logical flow your code needs to follow from commit to production.

Common stages include:

- Build: Compile your code or package your application

- Test: Run unit tests, integration tests, and any other automated checks

- Scan: Perform security scans or code quality analysis

- Package: Create deployment artifacts like Docker images

- Deploy: Push your application to staging or production environments

Your specific application might need more stages or fewer. The key is to think through what needs to happen to get from raw source code to a running application in production.

This logical flow becomes your automated software deployment pipeline.
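To make the flow concrete, here is one way the five stages could map onto GitHub Actions jobs chained with `needs`. Treat it as a sketch: the `make` targets (`scan`, `package`, `deploy`) and the `main` branch name are placeholders for whatever your project actually uses.

```yaml
# .github/workflows/pipeline.yml (sketch; every make target is a placeholder)
name: Pipeline
on:
  push:
    branches: [main]

jobs:
  build:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - run: make build

  test:
    needs: build            # runs only if 'build' succeeded
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - run: make test

  scan:
    needs: build            # independent of 'test', so the two run side by side
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - run: make scan

  package:
    needs: [test, scan]     # waits for both checks to pass
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - run: make package

  deploy:
    needs: package
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - run: make deploy
```

Each job starts on a fresh machine, so files produced by one job are not visible to the next unless you pass them along as artifacts.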

Step 2: Commit Your Pipeline Configuration to Source Control

Here’s a crucial best practice: your Jenkinsfile or GitHub Actions .yml file should live in your Git repository alongside your application code.

This approach, called “Pipeline as Code,” means your pipeline definition is versioned just like your application code.

You can:

- Track who changed what and when

- Review pipeline changes in pull requests

- Roll back to previous pipeline configurations if something breaks

- Keep your pipeline and application code in sync

Never maintain your pipeline configuration separately from your code. They’re part of the same system and should evolve together.

Step 3: Configure Automated Triggers

Your pipeline should run automatically when developers push code. No one should have to remember to click a “build” button.

For GitHub Actions: This is handled by the `on:` directive in your YAML file. We already saw `on: [push, pull_request]`, but you can also trigger on specific branches, tags, or even on a schedule.
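As an illustration of those narrower triggers, the fragment below limits runs to one branch, to version tags, and to a nightly schedule. The branch name `main`, the `v*` tag pattern, and the 03:00 schedule are assumptions chosen for the example.

```yaml
on:
  push:
    branches: [main]        # pushes to main
    tags: ['v*']            # or pushes of tags such as v1.2.0
  pull_request:
    branches: [main]        # pull requests that target main
  schedule:
    - cron: '0 3 * * *'     # every day at 03:00 UTC
```

A push is either a branch push or a tag push, so the workflow runs when either filter matches.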

For Jenkins: You need to configure a webhook. Here’s how:

Go to your GitHub or GitLab repository settings and add a webhook that points to your Jenkins server (usually something like https://your-jenkins-server/github-webhook/). This webhook sends a notification to Jenkins every time code is pushed.

Then in Jenkins, configure your pipeline job to build when it receives this webhook notification.

Once configured, every code push automatically triggers your pipeline. This is where the magic of continuous integration really happens.

Step 4: Validate the CI Pipeline

Time to test your work.

Make a small change to your code—add a comment, update a README, anything. Commit it and push it to your repository.

Then go to your Jenkins dashboard or the “Actions” tab in GitHub and watch the magic happen. You should see:

- Your pipeline trigger automatically

- The checkout stage pull your latest code

- The build stage compile your application

- The test stage run your test suite

If everything works, you’ll see a green checkmark or “success” indicator. That’s your confirmation that the CI part of your pipeline is working correctly.

If something fails, you’ll see exactly which stage failed and can click through to see the detailed logs. This immediate feedback is what makes CI so valuable.

Automating Your Release: The Continuous Deployment Steps

Building and testing code is great, but the real value comes from getting that code into production. Let’s add deployment to your pipeline.

Packaging Artifacts for Deployment

After a successful build, you need to package your application into a deployable artifact.

For containerized applications, this usually means running `docker build` to create a Docker image and then `docker push` to upload it to your container registry.

For traditional applications, it might be:

- Running `mvn package` to create a JAR file

- Running `npm run build` to create optimized JavaScript bundles

- Creating a ZIP archive of your application files

The specific commands depend on [your technology stack](https://codefresh.io/learn/ci-cd-pipelines/), but the principle is the same: create a clean, versioned artifact that contains everything needed to run your application.
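For the containerized case, a packaging job might look roughly like this. It is a sketch that assumes a `Dockerfile` at the repository root and two repository secrets named `DOCKERHUB_USERNAME` and `DOCKERHUB_TOKEN`; both names are hypothetical, as is the image name.

```yaml
jobs:
  package:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4

      - name: Log in to the registry
        # The token is piped through stdin rather than passed as a command-line flag
        run: echo "${{ secrets.DOCKERHUB_TOKEN }}" | docker login -u "${{ secrets.DOCKERHUB_USERNAME }}" --password-stdin

      - name: Build and push the image
        env:
          IMAGE: your-registry/your-app
        run: |
          # Tag with the commit SHA so every artifact maps to exactly one commit
          docker build -t "$IMAGE:${{ github.sha }}" .
          docker push "$IMAGE:${{ github.sha }}"
```

Tagging by commit SHA rather than only `latest` is a design choice: it lets you redeploy or roll back to a specific build without rebuilding it.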

Choosing a Deployment Strategy

Before you automate deployment, you need to decide how to safely roll out changes. Here are the most common strategies:

- Blue/Green Deployment: You maintain two identical production environments, “blue” and “green.” When you deploy a new version, you deploy it to whichever environment isn’t currently serving traffic. Once you confirm the new version is healthy, you switch all user traffic to it. This gives you instant rollback capability: just switch traffic back.

- Canary Deployment: You release the new version to a small subset of users first—maybe 1% or 5%. If no issues appear, you gradually increase the percentage until everyone is on the new version. This limits the blast radius if something goes wrong.

- Rolling Updates: You gradually replace old instances of your application with new ones, a few at a time. Users might hit either version temporarily, but there’s no downtime. This works well with container orchestration systems like Kubernetes.

Each strategy has tradeoffs between complexity, safety, and resource usage. Start simple and get more sophisticated as your needs grow.
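Of the three, rolling updates are often the simplest to try because Kubernetes supports them directly. The manifest below is a minimal sketch; the replica count, port `8080`, and the `/healthz` path are assumptions for illustration.

```yaml
# deployment.yaml: a Kubernetes Deployment that uses a rolling update (sketch)
apiVersion: apps/v1
kind: Deployment
metadata:
  name: your-app
spec:
  replicas: 4
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxUnavailable: 1   # at most one pod may be down during the rollout
      maxSurge: 1         # at most one extra pod may exist above 'replicas'
  selector:
    matchLabels:
      app: your-app
  template:
    metadata:
      labels:
        app: your-app
    spec:
      containers:
        - name: your-app
          image: your-registry/your-app:latest
          ports:
            - containerPort: 8080
          readinessProbe:   # new pods receive traffic only once this passes
            httpGet:
              path: /healthz
              port: 8080
```

If a rollout goes badly, `kubectl rollout undo deployment/your-app` returns to the previous revision.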

Implementing the Deployment Stage

Now you add deployment to your pipeline configuration.

In a Jenkinsfile: Add a new stage after your test stage:

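    stage('Deploy') {
        steps {
            // Assumes the image was built and tagged in an earlier stage
            sh 'docker push your-registry/your-app:latest'
            // 'docker restart' alone keeps running the old image, so replace the container.
            // Add your usual 'docker run' flags (ports, environment) as needed.
            sh 'ssh your-server "docker pull your-registry/your-app:latest && docker rm -f your-app && docker run -d --name your-app your-registry/your-app:latest"'
        }
    }

This pushes your Docker image to a registry and then connects to your server to pull the new image and replace the running container with one started from it.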

In GitHub Actions CI/CD: Add a new job or step that handles deployment:

    - name: Deploy to Production
      run: |
        # Start an agent first; ssh-add needs one to hold the key
        eval "$(ssh-agent -s)"
        echo "${{ secrets.DEPLOY_KEY }}" | ssh-add -
        # Assumes the server's host key is already in known_hosts on the runner
        ssh user@your-server 'cd /app && git pull && systemctl restart your-app'

The specifics vary wildly depending on where you’re deploying: AWS, Azure, Google Cloud, or your own servers. But the pattern is the same: authenticate, transfer the artifact, and restart or update the running application.

Handling Secrets Securely

This is critical: deployment keys, database passwords, API tokens, and other secrets must NEVER be stored in plain text in your pipeline files.

If you put secrets in your repository, even a private one, you’re asking for trouble. People leave companies, repositories get accidentally made public, and attackers specifically search for exposed credentials.

For Jenkins: Use the Jenkins Credentials system. Add your secrets through the Jenkins UI, then reference them in your Jenkinsfile:

    environment {
        API_KEY = credentials('my-api-key-id')
    }

For GitHub Actions: Use Encrypted Secrets. Add them in your repository settings under “Secrets and variables,” then reference them in your workflow:

    env:
      API_KEY: ${{ secrets.API_KEY }}

Both systems store your secrets encrypted at rest and only inject them into the build environment when the pipeline runs. Both also mask secret values in build logs, but masking is best effort (an encoded or transformed value can slip through), so avoid printing secrets at all.

Making Your Pipeline Robust: Testing, Monitoring, and Feedback

A basic pipeline that builds, tests, and deploys is a great start. But production-ready pipelines need more sophisticated capabilities.

Advanced Automated Testing

Unit tests are essential, but they’re not enough for a robust pipeline.

- Integration Tests: These test how different parts of your application work together. Does your API correctly interact with the database? Do your microservices communicate properly? Integration tests catch issues that unit tests miss because they test real interactions.

- End-to-End (E2E) Tests: These test your entire application from a user’s perspective. They might use tools like Selenium or Cypress to actually click through your web interface and verify that complete user workflows function correctly.

Add these to your pipeline by running them against a deployed instance in a staging environment. This catches issues before they reach production.
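One way to wire this up is to make production depend on a test job that runs after the staging deploy. The sketch below assumes hypothetical `make` targets and a staging address of `https://staging.example.com`; your test runner would read `BASE_URL` however it normally takes configuration.

```yaml
jobs:
  deploy-staging:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - run: make deploy-staging        # placeholder for your staging deploy

  staging-tests:
    needs: deploy-staging               # wait until staging has the new build
    runs-on: ubuntu-latest
    env:
      BASE_URL: https://staging.example.com   # hypothetical staging address
    steps:
      - uses: actions/checkout@v4
      - run: make test-integration      # placeholder: API and database checks
      - run: make test-e2e              # placeholder: Selenium or Cypress suite

  deploy-production:
    needs: staging-tests                # production is gated on the staging tests
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - run: make deploy-production     # placeholder for your production deploy
```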

Integrating Security and Code Analysis

Security shouldn’t be an afterthought. Integrate security scanning directly into your pipeline.

- Security Scanning: Tools like Snyk, Trivy, or Anchore can scan your Docker images or dependencies for known vulnerabilities. Add a pipeline stage that fails the build if critical vulnerabilities are detected.

- Code Quality Analysis: Tools like SonarQube perform deep analysis of your code, detecting code smells, complexity issues, and potential bugs. They can enforce quality gates that prevent low-quality code from reaching production.

This practice of integrating security into your CI/CD pipeline is often called DevSecOps—making security everyone’s responsibility and catching issues early.
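As a rough illustration of a scan that fails the build, the job below uses the Trivy action published by Aqua Security. It is a sketch: it assumes the image is already pushed and pullable from the runner, and the image name is a placeholder.

```yaml
jobs:
  scan:
    runs-on: ubuntu-latest
    steps:
      - name: Scan the image for known vulnerabilities
        uses: aquasecurity/trivy-action@master   # pin to a release tag or commit in real use
        with:
          image-ref: your-registry/your-app:latest
          severity: CRITICAL      # only report critical findings
          exit-code: '1'          # a non-zero exit fails the job when findings match
          ignore-unfixed: true    # skip vulnerabilities that have no fix available yet
```

Starting with `CRITICAL` only keeps the gate from blocking every build on day one; you can widen it to `CRITICAL,HIGH` once the backlog is under control.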

Setting Up Notifications

Fast feedback loops are crucial for effective CI/CD.

Configure your pipeline to send notifications when builds or deployments complete—especially when they fail.

Most teams use:

- Slack or Microsoft Teams: Send a message to a dedicated channel with build status, who triggered it, and links to logs

- Email: For longer-form notifications or when team members aren’t actively monitoring chat

- SMS or PagerDuty: For critical production deployment failures that need immediate attention

The goal is ensuring the right people know about issues immediately, not hours later when they happen to check the dashboard.
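A failure notification can be as small as one extra step. This sketch posts to a Slack incoming webhook; it assumes you have created such a webhook and stored its URL in a repository secret named `SLACK_WEBHOOK_URL` (a hypothetical name).

```yaml
jobs:
  build-and-test:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - run: make build
      - run: make test

      - name: Notify Slack on failure
        if: failure()        # runs only when an earlier step in this job failed
        env:
          SLACK_WEBHOOK_URL: ${{ secrets.SLACK_WEBHOOK_URL }}
          RUN_URL: ${{ github.server_url }}/${{ github.repository }}/actions/runs/${{ github.run_id }}
        run: |
          # GITHUB_REF_NAME and GITHUB_ACTOR are set by the runner for every job
          curl -sS -X POST -H 'Content-Type: application/json' \
            --data "{\"text\": \"Build failed on ${GITHUB_REF_NAME}, triggered by ${GITHUB_ACTOR}: ${RUN_URL}\"}" \
            "$SLACK_WEBHOOK_URL"
```

The message carries the three things the list above asks for: the status, who triggered the run, and a link to the logs.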

Tracking Key Metrics

You can’t improve what you don’t measure. Track these key metrics to understand your pipeline’s health and efficiency:

- Build Duration: How long does it take from code commit to completed build? If this grows too long, developers lose patience and the feedback loop slows down.

- Deployment Frequency: How often do you deploy to production? Higher frequency generally indicates a healthier, more confident team.

- Change Failure Rate: What percentage of deployments cause issues that require a rollback or hotfix? This measures the quality of your testing and deployment process.

- Mean Time to Recovery: When something does break in production, how quickly can you recover? Fast recovery matters more than never failing.

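Deployment frequency, change failure rate, and time to recovery are three of the four DORA metrics (the fourth is lead time for changes). Together with build duration, they give you objective data about your software delivery performance.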

Pro Tips: Best Practices and Troubleshooting

Let me share some hard-won lessons that will save you time and frustration.

Best Practices

Keep Pipelines Modular & DRY (Don’t Repeat Yourself)

If you find yourself copy-pasting the same pipeline stages across multiple projects, create reusable templates or scripts instead.

In Jenkins, use Shared Libraries to define common functions. In GitHub Actions, create reusable workflows or composite actions. This makes your pipelines easier to maintain and ensures consistency across projects.
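On the GitHub Actions side, a reusable workflow could look something like this. The repository name `your-org/shared-workflows`, the file names, and the `make-target` input are all hypothetical; the two YAML documents below would live in two separate files.

```yaml
# File 1: .github/workflows/build-and-test.yml in your-org/shared-workflows
name: Build and Test
on:
  workflow_call:            # makes this workflow callable from other workflows
    inputs:
      make-target:
        type: string
        default: test

jobs:
  build-and-test:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4   # checks out the calling repository
      - run: make build
      - run: make ${{ inputs.make-target }}
---
# File 2: .github/workflows/ci.yml in each project that reuses it
name: CI
on: [push, pull_request]

jobs:
  ci:
    uses: your-org/shared-workflows/.github/workflows/build-and-test.yml@main
    with:
      make-target: test
```

Each project's own workflow shrinks to a few lines, and a fix to the shared file reaches every caller that tracks that ref.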

Version Control Your Pipeline

We mentioned this before, but it bears repeating: your Jenkinsfile or workflow YAML must live in Git.

This lets you track all pipeline changes, understand why something was changed, and roll back if a pipeline modification breaks things. Treat your pipeline configuration with the same care you treat your application code.

Use Parallel Stages

If you have independent jobs—like running different test suites or building for multiple platforms—run them in parallel rather than sequentially.

In Jenkins:

    stage('Parallel Tests') {
        parallel {
            stage('Unit Tests') {
                steps { sh 'npm run test:unit' }
            }
            stage('Integration Tests') {
                steps { sh 'npm run test:integration' }
            }
        }
    }

In GitHub Actions, separate jobs already run in parallel by default, and a matrix placed under a job fans it out into one parallel run per value:

    strategy:
      matrix:
        node: [14, 16, 18]

This can dramatically reduce total pipeline execution time, giving developers faster feedback.

Common Pitfalls and How to Fix Them

Problem: Hardcoded Secrets

You’ve committed database passwords or API keys directly in your Jenkinsfile or workflow YAML.

Solution: Immediately rotate those credentials (they’re now compromised) and use the proper credential stores. For Jenkins, use the Jenkins Credentials system. For GitHub Actions, use Encrypted Secrets. Never, ever commit secrets to version control.

Problem: Flaky Tests

Your pipeline randomly fails because some tests are unreliable—they pass sometimes and fail other times with the same code.

Solution: Flaky tests destroy confidence in your pipeline. Isolate and debug these tests. Often they have timing issues or depend on external services that are unreliable. Fix or delete flaky tests. A pipeline is only as reliable as its weakest test.

Problem: Overly Complex Initial Setup

You’re trying to implement blue/green deployments, canary releases, security scanning, and multi-region deployments all in your first pipeline.

Solution: Start simple. Get a basic build-and-test pipeline working first. Then incrementally add deployment. Then add security scanning. Then optimize deployment strategies. Trying to do everything at once leads to frustration and abandoned projects. Walk before you run.

Your Journey with CI/CD Has Just Begun

Congratulations—you now understand how to build a CI/CD pipeline from scratch.

We’ve covered the fundamentals of continuous integration and deployment, walked through a practical continuous integration setup with both Jenkins and GitHub Actions, implemented the key continuous deployment steps, and explored best practices for testing, monitoring, and troubleshooting your pipeline.

You’ve seen a Jenkins pipeline example and a GitHub Actions CI/CD configuration that you can adapt for your own projects. You understand how to achieve true automated software deployment that reduces manual errors and accelerates your release cycles.

But this is just the beginning of what’s possible.

As you gain confidence, explore more advanced features:

- Matrix Builds: Run your tests across multiple versions of your programming language, multiple operating systems, or multiple database versions simultaneously. This catches compatibility issues early.

- Self-Hosted Runners: Instead of relying on GitHub-hosted runners, run your own build machines (Jenkins agents are always machines you manage yourself). This gives you more control, potentially better performance, and the ability to access internal resources during builds.

- Advanced Deployment Strategies: Experiment with sophisticated patterns like feature flags (deploying code that’s conditionally enabled) or progressive delivery (gradually rolling out features based on user attributes).

The official documentation for Jenkins and GitHub Actions is excellent and worth exploring in depth. Study advanced automated software deployment strategies used by companies like Netflix, Amazon, and Google to understand how CI/CD scales to massive systems.

The software industry has learned that manual processes don’t scale. Automation, testing, and continuous delivery are no longer competitive advantages—they’re basic requirements for modern software teams.

Your pipeline will evolve as your needs grow. That’s expected and healthy. The key is to start now, iterate constantly, and always be improving your software delivery process.

Now go build something great.

Frequently Asked Questions (FAQ)

1. What is the main difference between Continuous Integration and Continuous Deployment?

Continuous Integration (CI) focuses on automating the build and testing of code every time a change is pushed to the repository. Its goal is to verify code quality and integration early. Continuous Deployment (CD) is the next step: it automatically deploys the code to production after it passes all CI stages. In short, CI verifies the code, and CD releases it.

2. Do I need to use Jenkins to build a CI/CD pipeline?

No. While Jenkins is a very powerful and popular open-source tool, many alternatives exist. GitHub Actions is a great choice if your code is on GitHub, as it’s tightly integrated. Other options include GitLab CI/CD, CircleCI, Travis CI, and cloud-native services from AWS, Google Cloud, and Azure. The best tool depends on your specific needs, existing ecosystem, and team expertise.

3. Why is “Pipeline as Code” so important?

“Pipeline as Code” means storing your pipeline’s configuration (like a `Jenkinsfile` or a GitHub Actions YAML file) in your version control system alongside your application code. This is critical because it makes your pipeline versioned, reviewable, and repeatable. You can track changes, review pipeline modifications in pull requests, and easily restore a previous working configuration if something breaks.

4. What is the biggest mistake to avoid when building a CI/CD pipeline?

One of the biggest and most dangerous mistakes is hardcoding secrets (like API keys, passwords, or SSH keys) directly into your pipeline configuration files. This exposes sensitive credentials in your source code repository, creating a massive security risk. Always use the built-in credential management systems provided by your CI/CD tool, such as Jenkins Credentials or GitHub Encrypted Secrets.

5. How can I make my pipeline faster?

Slow pipelines kill developer productivity. To speed them up, focus on a few key areas: run jobs in parallel (e.g., run unit tests and integration tests at the same time), use caching for dependencies to avoid re-downloading them on every run, optimize your build and test scripts, and ensure your build agents have sufficient resources (CPU/RAM).
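Dependency caching is usually the cheapest of these to try. The sketch below assumes a Node.js project with a `package-lock.json`, matching the `npm` commands used earlier; other ecosystems follow the same pattern with a different path and lockfile.

```yaml
jobs:
  test:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4

      - name: Restore the npm download cache
        uses: actions/cache@v4
        with:
          path: ~/.npm
          # The key changes whenever the lockfile changes, so stale caches are not reused
          key: npm-${{ runner.os }}-${{ hashFiles('**/package-lock.json') }}
          # Fall back to the most recent cache for this OS when there is no exact match
          restore-keys: |
            npm-${{ runner.os }}-

      - run: npm ci
      - run: npm test
```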